Just a few days after I set up my k3s cluster with an external data source, I found the article “High Availability Embedded etcd” on the official k3s website. Since the external database was the single point of failure in my previous setup, I was excited to try this out.

This time, in addition to setting up the cluster itself, I also set up metal-lb for load balancing and cert-manager for enabling HTTPS. So this is basically everything I’ve done to serve a highly available HTTPS website on several vanilla Debian 12 VMs.

As the title says, I’m using SSH remote port forwarding to expose my home network to the internet. This is not a typical setup, but it is ideal if you:

- don’t have control over your router

- don’t have a dedicated public IP for your household

Set up the nodes

First things first, let’s set up our master nodes.

For the nodes (masters and workers alike) to communicate with each other, we need a shared token that is passed to every node. In my case I used openssl to generate one.

|

|
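A minimal sketch of this step, assuming 48 random bytes are enough for a token (the exact length is an arbitrary choice here, and the variable name K3S_TOKEN is only for illustration):

```shell
# Generate 48 random bytes and base64-encode them (64 characters).
# tr strips any line breaks that openssl inserts into longer output.
K3S_TOKEN="$(openssl rand -base64 48 | tr -d '\n')"
echo "$K3S_TOKEN"
```

With a larger byte count, openssl wraps the base64 output across lines, which is why the sketch strips newlines before storing the value.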

The output should look like this:

|

|

Don’t worry about the line break; just use the entire string as the token.

Once the token is ready, we can use it with the installation script plus a few options to install and launch k3s on the first master node.

|

|
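As a rough sketch of what this install command can look like, the snippet below only prints it so that it can be reviewed before being run on the first master node. The token placeholder and the IP 192.168.1.11 are assumptions, not values from my setup.

```shell
# Placeholder values (assumptions): substitute your own token and node IP.
K3S_TOKEN="${K3S_TOKEN:-replace-with-generated-token}"
NODE_IP="${NODE_IP:-192.168.1.11}"

# Build the command for the FIRST master: --cluster-init starts embedded etcd.
INIT_CMD="curl -sfL https://get.k3s.io | K3S_TOKEN='${K3S_TOKEN}' sh -s - server --cluster-init --node-ip ${NODE_IP}"

# Print it for review; paste the output into a shell on the node to run it.
echo "$INIT_CMD"
```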

Options

- --cluster-init: tells k3s to use embedded etcd as the data source

- --node-ip: specifies the IP address to use for inter-node communication (my VM has multiple IPs)

If your master node only has one IP, you can safely omit the --node-ip option.

After the script finishes, confirm the node is up and running with:

|

|

which should output something like this:

|

|

If it looks good, we can proceed to join the second master node to the cluster.

|

|
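A sketch of the join command for an additional master, again printed rather than executed. The difference from the first node is that --cluster-init is replaced by --server pointing at an existing master; both IP addresses here are hypothetical.

```shell
# Placeholder values (assumptions): token, first master IP, and this node's IP.
K3S_TOKEN="${K3S_TOKEN:-replace-with-generated-token}"
FIRST_MASTER_IP="${FIRST_MASTER_IP:-192.168.1.11}"
NODE_IP="${NODE_IP:-192.168.1.12}"

# Additional masters join through the API port (6443) of an existing master.
JOIN_CMD="curl -sfL https://get.k3s.io | K3S_TOKEN='${K3S_TOKEN}' sh -s - server --server https://${FIRST_MASTER_IP}:6443 --node-ip ${NODE_IP}"

echo "$JOIN_CMD"
```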

And join the third master node.

|

|

Confirm the results by running on any node:

|

|

If all three nodes show up, we now have a 3-node k3s cluster. 🥳

|

|

The number of master nodes should be odd, as stated in the official guide, so that etcd can keep a majority (quorum) when a node fails.

If you have a non-standard network setup, take some time to verify that the kubectl command works on all master nodes, and also make sure that the pods in the kube-system namespace are running properly.

|

|

Now that everything looks good, we can join our worker nodes to the cluster. To do that, just run the following command on all nodes that you want to configure as worker nodes:

|

|
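A sketch of the worker join command, printed for review. When K3S_URL is set, the install script sets the node up as an agent (worker) instead of a server. The master IP below is a placeholder.

```shell
# Placeholder values (assumptions): token and the IP of any master node.
K3S_TOKEN="${K3S_TOKEN:-replace-with-generated-token}"
MASTER_IP="${MASTER_IP:-192.168.1.11}"

# K3S_URL makes the installer configure this node as an agent.
AGENT_CMD="curl -sfL https://get.k3s.io | K3S_URL=https://${MASTER_IP}:6443 K3S_TOKEN='${K3S_TOKEN}' sh -"

echo "$AGENT_CMD"
```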

FYI: you can use any of the three master nodes here.

Confirm that the worker nodes have joined successfully with sudo kubectl get node:

|

|

Node name refers to the hostname of each node. To change it, just change the hostname.

Control k3s remotely

The kubectl command doesn’t have to be run from the master nodes. To control k3s from another machine, all we have to do is install the kubectl package and put the .kube/config file in place.

I’m using Arch (btw) on my primary PC, so I’ll just do

|

|

For Debian-based distros, do

|

|

For other distros/OSes, refer to https://kubernetes.io/docs/tasks/tools/

By default, kubectl assumes the control plane runs on localhost, so we have to point it to one of the master nodes. To do that, go to one of the master nodes and do

|

|

This will allow you to run kubectl without sudo on that master node. Confirm it’s working, and then copy the config file to the machine you want to control k3s from. Example (on my primary PC):

|

|
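One detail worth sketching: the config file that k3s generates points at https://127.0.0.1:6443, so a copy on another machine needs its server address rewritten. The helper below is a hypothetical function (its name and the example IP are my assumptions) that does this with sed.

```shell
# Rewrite the API server address in a kubeconfig copied from a master node.
# Usage: point_kubeconfig <kubeconfig-path> <master-ip>
point_kubeconfig() {
  cfg="$1"
  master_ip="$2"
  # Replace the localhost address with the master's address, via a temp file
  # so the command works with both GNU and BSD sed.
  sed "s#https://127\.0\.0\.1:6443#https://${master_ip}:6443#" "$cfg" > "${cfg}.tmp" \
    && mv "${cfg}.tmp" "$cfg" \
    && chmod 600 "$cfg"   # the file contains credentials, keep it private
}
```

For example, after copying the file to ~/.kube/config on the workstation, running point_kubeconfig ~/.kube/config 192.168.1.11 would point kubectl at that master.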

Now you should be able to run kubectl directly from your workstation.

From this point I will use the command kc a lot, which I’ve aliased to kubectl. Feel free to do the same.

Install helm charts

Helm is a package manager for Kubernetes add-ons. Here is how to install it on Debian-based distros:

|

|

Refer to https://helm.sh/docs/intro/install/ for details.

Using Helm, we want to install two things:

- metal-lb: “a load-balancer implementation for bare metal Kubernetes clusters”, to set up load balancing without any cloud provider solutions

- cert-manager: “X.509 certificate management for Kubernetes and OpenShift”, to manage obtaining and renewing SSL certificates for us

Let’s start by installing metallb.

|

|

This creates a namespace called metallb-system, and deploys pods to all nodes for handling load balancing. Example:

|

|

For cert-manager, start by defining its custom resource types, such as Certificate, using an official release YAML file:

|

|

Be sure to update the version (v1.16.1 in the above example) to the latest available one

If installed successfully, you should see no error with this command:

|

|

Then we will install cert-manager with helm:

|

|

Use the same version as above

|

|

This tells Helm not to install the CRDs (custom resource definitions), since we have already installed them (including the Certificate resource type). The video tutorial by Techno Tim explains this in detail.

You should now see three pods in the cert-manager namespace.

|

|

Configure metallb

To use metal-lb as our load balancer, we need to tell it which IP range it may assign to services. Ideally this range should not be handed out by DHCP. In Layer 2 mode, metal-lb makes our nodes respond to ARP requests for the specified IPs, so that requests to those IPs are routed to the cluster.

|

|

network/metallb-config.yml:

|

|
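A minimal sketch of such a config, written out with a heredoc. The pool name, the advertisement name, and the address range 192.168.1.240-192.168.1.250 are assumptions; pick a range outside your DHCP pool.

```shell
mkdir -p network

# IPAddressPool: the addresses MetalLB may assign to LoadBalancer services.
# L2Advertisement: announce those addresses in Layer 2 (ARP) mode.
cat > network/metallb-config.yml <<'EOF'
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: default-pool
  namespace: metallb-system
spec:
  addresses:
    - 192.168.1.240-192.168.1.250
---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: default-l2
  namespace: metallb-system
spec:
  ipAddressPools:
    - default-pool
EOF

# Apply afterwards with: kubectl apply -f network/metallb-config.yml
```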

At this point, we want to disable the default Klipper load balancer and let metal-lb handle everything. To do that, we need to add an argument to the k3s startup command. Do the following for each master node:

|

|

Under the initial block of comments, add this:

|

|
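A sketch of what such an override can look like, assuming it is pasted into the editor opened by sudo systemctl edit k3s. In the k3s --disable flag, Klipper goes by the name servicelb. The arguments before --disable are hypothetical; copy the ones from your node’s existing unit file instead.

```shell
# Write the override to a local file for review.
cat > k3s-override.conf <<'EOF'
[Service]
# An empty ExecStart= clears the command defined in the original unit file.
ExecStart=
# Hypothetical arguments: keep your node's existing ones, then append --disable servicelb.
ExecStart=/usr/local/bin/k3s server --cluster-init --node-ip 192.168.1.11 --disable servicelb
EOF

cat k3s-override.conf
```

The empty ExecStart= line matters: systemd rejects a second ExecStart for this type of service unless the first one is cleared.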

This will override the original command, and disable klipper after restarting the k3s service.

Restart k3s to reflect changes

|

|

Remember to do this for all of your master nodes.

After the restart, you should now see only one daemon set created by metal-lb

|

|

Also, check the external IP address that is assigned to the traefik service in the kube-system namespace. If you don’t like it, you can edit the service.

|

|

Deploy a website

Now that we have our load balancer and certificate manager ready, it’s time to deploy something. Here, I will use my personal website as an example.

First, create a namespace and deploy the pod, service, and ingress

|

|

myself.yml:

|

|
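A generic sketch of a manifest with this shape, not my actual file. The namespace and resource name myself, the nginx:stable image, and the host profile.example.com are all placeholders.

```shell
cat > myself.yml <<'EOF'
apiVersion: v1
kind: Namespace
metadata:
  name: myself
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: myself
  namespace: myself
spec:
  replicas: 2
  selector:
    matchLabels:
      app: myself
  template:
    metadata:
      labels:
        app: myself
    spec:
      containers:
        - name: web
          image: nginx:stable   # placeholder image
          ports:
            - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: myself
  namespace: myself
spec:
  selector:
    app: myself
  ports:
    - port: 80
      targetPort: 80
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: myself
  namespace: myself
spec:
  rules:
    - host: profile.example.com   # placeholder domain
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: myself
                port:
                  number: 80
EOF

# Apply afterwards with: kubectl apply -f myself.yml
```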

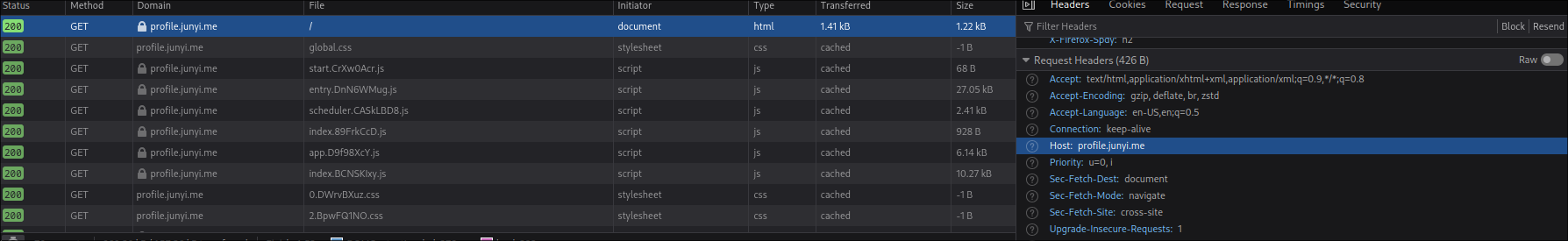

The domains profile.junyi.me and *.junyi.me are my domains that I registered on Cloudflare. Replace them with your own domain.

With the ingress resource configured, traefik will now route any traffic arriving at its load balancer external IP to your service, based on the value of the Host header, which browsers add automatically.

From internet to cluster

Usually, at this point, you would want to point your DNS A record to your home router, and configure port forwarding from your router to the load balancer IP of the traefik service.

However, since I don’t have control over the router where I live, I had to resort to the workaround of using SSH remote port forwarding. It is a bit slow for larger files, but so far it’s working fine for my personal website and this blog.

To set it up, we need two things:

- A VM on the cloud - I’m going with Amazon EC2, but any cloud provider would work

- A stable SSH connection to that VM

First, set up the cloud VM of your choice. Make sure to open ports 80 and 443 on the firewall and the VM itself.

Once that’s done, update/add the DNS A record of your domain to point to the public IP address of the VM.

Then, you can use the image jyking99/ssh-forward to set up an SSH reverse port forward, to route traffic from the publicly available server to your cluster.

Set up port forwarding for HTTP and HTTPS

|

|

ssh-forward.yml:

|

|
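To illustrate the underlying mechanism (this is the plain ssh equivalent, not the configuration of the jyking99/ssh-forward image), the sketch below prints an ssh command that opens ports 80 and 443 on the cloud VM and tunnels them to traefik. The traefik IP and the VM login are placeholders.

```shell
# Placeholder values (assumptions): traefik's external IP and the VM login.
TRAEFIK_IP="${TRAEFIK_IP:-192.168.1.240}"
CLOUD_VM="${CLOUD_VM:-root@vm.example.com}"

# -N: no remote command, only forwarding.
# -R bind_address:port:host:hostport: listen on the VM, forward to the cluster.
# ServerAliveInterval keeps the tunnel alive; ExitOnForwardFailure makes ssh
# exit if a port cannot be bound, so a supervisor can restart it.
FORWARD_CMD="ssh -N -o ServerAliveInterval=30 -o ExitOnForwardFailure=yes -R 0.0.0.0:80:${TRAEFIK_IP}:80 -R 0.0.0.0:443:${TRAEFIK_IP}:443 ${CLOUD_VM}"

echo "$FORWARD_CMD"
```

Two caveats on the VM side: sshd only binds forwarded ports to non-loopback addresses when GatewayPorts is enabled in sshd_config, and binding ports below 1024 requires logging in as root.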

Now let’s check if we can access the website. Just open your browser and type in your domain name. Note that we haven’t configured HTTPS yet, so if you use HTTPS, there should be a certificate warning. Aside from that, you should be presented with your website hosted in your own cluster.

Take a moment to admire your beautiful website, and then we’ll proceed to get rid of that warning screen or the broken padlock that’s been bothering you.

Enable HTTPS

To enable HTTPS, we have to supply two things to cert-manager:

- the email address that you used to register the domain

- an API token that grants permission to edit the domain information (for Cloudflare, here is the guide: https://developers.cloudflare.com/fundamentals/api/get-started/create-token/)

I used Let’s Encrypt to generate my certificate, but feel free to use other certificate authorities.

Once you’ve got the token, toss it in the yml file below, and apply it

|

|

secret-cf-token.yaml:

|

|
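A sketch of such a secret, assuming the name cloudflare-api-token-secret and the key api-token (both are arbitrary, but the issuer must reference the same ones). It lives in the cert-manager namespace so that a cluster-wide issuer can read it.

```shell
# Placeholder (assumption): export your real token before running this.
CF_API_TOKEN="${CF_API_TOKEN:-replace-with-your-cloudflare-token}"

# stringData lets us write the token as plain text; Kubernetes encodes it.
cat > secret-cf-token.yaml <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: cloudflare-api-token-secret
  namespace: cert-manager
type: Opaque
stringData:
  api-token: ${CF_API_TOKEN}
EOF

# Apply afterwards with: kubectl apply -f secret-cf-token.yaml
```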

Then we’ll tell cert-manager about our certificate authority (Let’s Encrypt)

|

|

letsencrypt-staging.yml:

|

|
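A sketch of a staging issuer that solves DNS-01 challenges through Cloudflare. The email address and the account key secret name are placeholders, and the token secret is assumed to be named cloudflare-api-token-secret with the key api-token.

```shell
cat > letsencrypt-staging.yml <<'EOF'
apiVersion: cert-manager.io/v1
kind: ClusterIssuer
metadata:
  name: letsencrypt-staging
spec:
  acme:
    # Let's Encrypt staging endpoint: generous rate limits, untrusted certificates.
    server: https://acme-staging-v02.api.letsencrypt.org/directory
    email: you@example.com            # placeholder
    privateKeySecretRef:
      name: letsencrypt-staging-account-key
    solvers:
      - dns01:
          cloudflare:
            apiTokenSecretRef:
              name: cloudflare-api-token-secret
              key: api-token
EOF

# Apply afterwards with: kubectl apply -f letsencrypt-staging.yml
```

Certificates from the staging endpoint still trigger browser warnings. A production issuer looks the same except for its name and the server URL https://acme-v02.api.letsencrypt.org/directory.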

Then in the namespace of the website, apply the following to start a DNS-01 challenge

|

|

your-domain-production.yml:

|

|
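A sketch of a Certificate resource for this step. The namespace myself, the resource and secret names, the issuer name letsencrypt-production, and the example.com domains are all placeholders.

```shell
cat > your-domain-production.yml <<'EOF'
apiVersion: cert-manager.io/v1
kind: Certificate
metadata:
  name: example-com
  namespace: myself                 # namespace of the website
spec:
  secretName: example-com-tls       # cert-manager stores the key pair here
  issuerRef:
    name: letsencrypt-production    # hypothetical issuer name
    kind: ClusterIssuer
  dnsNames:
    - example.com
    - "*.example.com"
EOF

# Apply afterwards with: kubectl apply -f your-domain-production.yml
```

An ingress in the same namespace can then reference the secret name under spec.tls to serve the certificate.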

This might take some time, since cert-manager has to wait for the DNS record to propagate. You can follow the cert-manager logs to see the status.

|

|

It will complain like this for a while, and finally, when you see a log like this, the DNS challenge has succeeded

|

|

This has to be done every time you want to deploy a web service in a new namespace, since the generated certificate is only visible in one namespace. Ingress resources in the same namespace can share the same certificate.

With all of these done, you should now be able to access your domain in your browser without any warning. Moment of truth…

If everything worked, congratulations! 🥳🥳🥳 You have successfully self-hosted a website.

Conclusion

As a software engineer, I have written quite a lot of code for web services, including front-end, back-end, CLI runners, and so on. But deploying them to test and production environments was rarely my job (especially production), and a lot of it seemed like magic to me. Setting up this home lab environment was a step towards demystifying that process, and I feel like I’m learning a lot just by following tutorials and resolving the issues I encountered along the way.

At the moment, I have some thoughts on improving this setup, for example:

- Set up DDNS on the cloud VM, in case the public IP changes

- Set up a DNS failover for the master nodes, so that if one node goes down, I can automatically use the remaining ones for kubectl

but these are for another time.